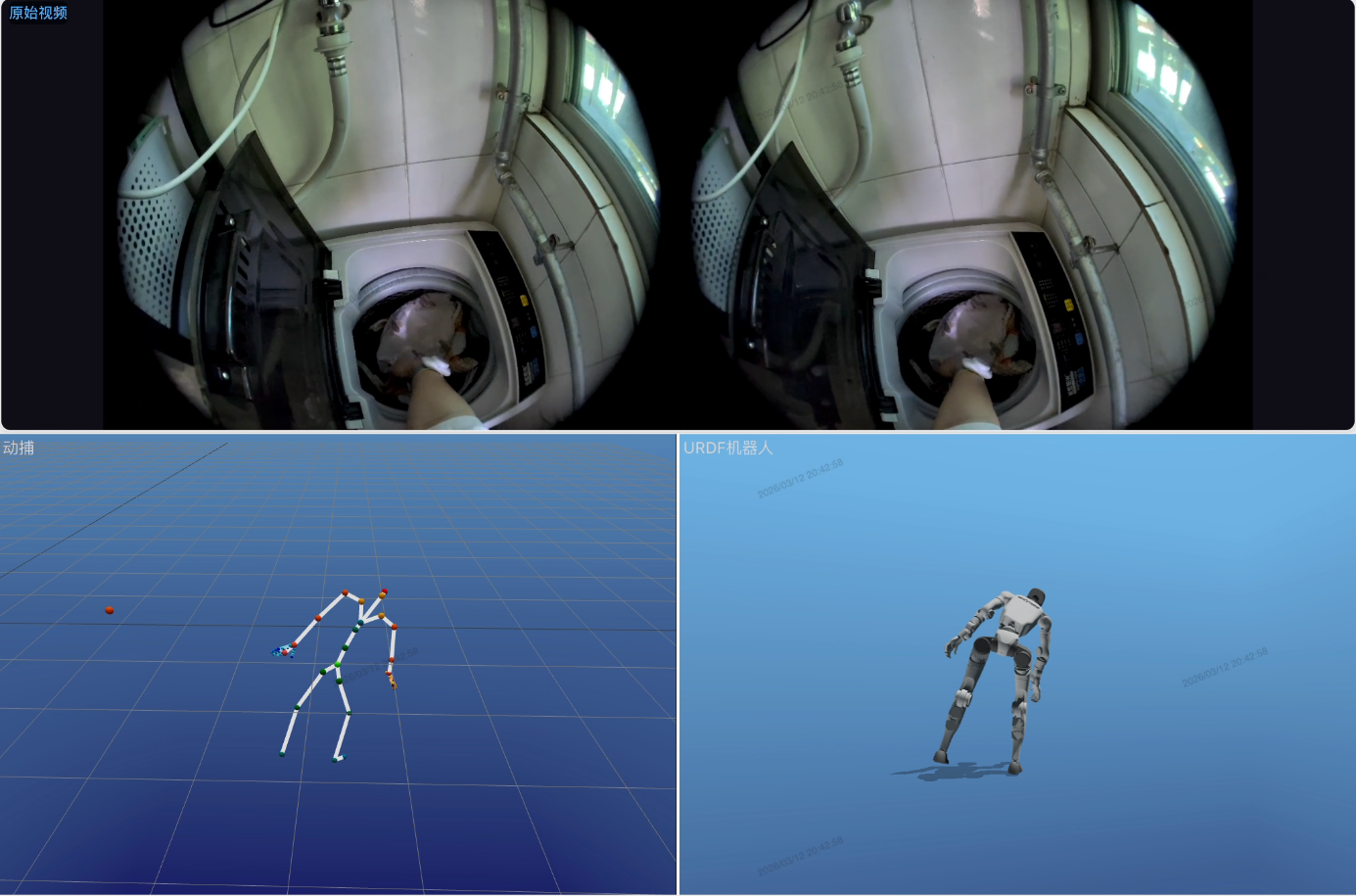

100K Hours Egocentric Dataset for Embodied AI & Robot Manipulation

egocentric dataset

robot manipulation dataset

embodied AI dataset

VLA dataset

multimodal robotics dataset

first person video dataset

human pose dataset

3D reconstruction dataset

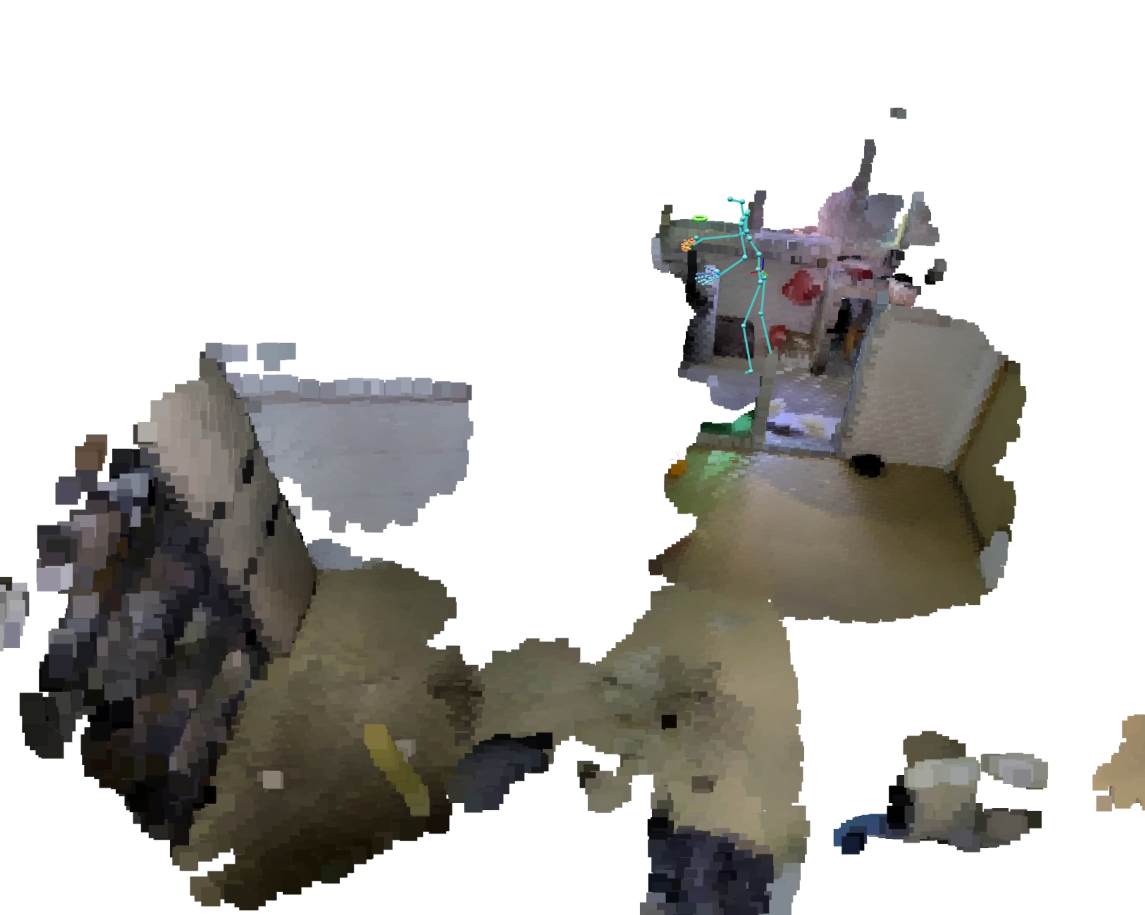

This large-scale egocentric dataset includes over 100,000 hours of human manipulation data captured from a first-person perspective across diverse real-world scenarios. Each data sample includes synchronized multimodal information: binocular video, camera parameters, 3D scene reconstruction point clouds, human joint tracking, and stepwise semantic annotations. It is designed to train embodied AI and Vision-Language-Action (VLA) models, enabling systems to learn from human demonstrations in realistic environments.

This is a paid dataset for commercial use, research purposes, and more. Licensed, ready-made datasets help jump-start AI projects.

Specifications

Data content

100,000 hours of human manipulation data captured from a first-person view across real-world scenarios. Each record includes: ① time-aligned binocular video, ② binocular camera parameters, ③ a 3D scene reconstruction point cloud file, ④ human body joint data, and ⑤ a stepwise semantic annotation file.
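As a rough illustration of how the five per-record components might be organized on the consumer side, here is a minimal Python sketch. The class name `EgoRecord`, the helper `record_from_dir`, every file name, and the choice of one video file per eye are assumptions made for this example only; the listing does not describe the actual delivery layout or file formats.

```python
from dataclasses import dataclass, fields
from pathlib import Path
from typing import List


@dataclass
class EgoRecord:
    """One record with the five listed components (field names are hypothetical)."""

    left_video: Path        # (1) time-aligned binocular video, left view (assumed split)
    right_video: Path       # (1) time-aligned binocular video, right view (assumed split)
    camera_params: Path     # (2) binocular camera parameters
    point_cloud: Path       # (3) 3D scene reconstruction point cloud file
    body_joints: Path       # (4) human body joint data
    step_annotations: Path  # (5) stepwise semantic annotation file

    def missing_files(self) -> List[Path]:
        """Return every component path that does not exist on disk."""
        paths = [getattr(self, f.name) for f in fields(self)]
        return [p for p in paths if not p.exists()]


def record_from_dir(root: Path) -> EgoRecord:
    """Build a record from one directory, using assumed (hypothetical) file names."""
    return EgoRecord(
        left_video=root / "left.mp4",
        right_video=root / "right.mp4",
        camera_params=root / "camera.json",
        point_cloud=root / "scene.ply",
        body_joints=root / "joints.json",
        step_annotations=root / "steps.json",
    )
```

A completeness check such as `record_from_dir(Path("some_record")).missing_files()` could be run before training to flag records that lack one of the modalities; the paths would need to be adapted to whatever layout the delivered data actually uses.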

Collection devices

PICO 4 Ultra headset worn on the head, and IMU wristbands worn on both wrists

Data distribution

Real-world scenarios (such as kitchens, rooms, and hotels) covering multiple daily manipulation tasks (such as food preparation and cooking, cleaning, object organizing and storage, bed making, and clothes folding).
