➤ Open source datasets for autonomous driving

The quality and diversity of datasets largely determine how capable an AI model can be. Whether the model is used for smart security, autonomous driving, or human-machine interaction, the accuracy of its datasets directly affects its performance. As data collection technology develops, all kinds of customized datasets are constantly being created to support the optimization of AI algorithms. Through in-depth research on these datasets, the application prospects of AI technology will broaden.

Data is the oil of the artificial intelligence era. With the development of the automotive industry and the rollout of autonomous driving business scenarios, autonomous driving algorithms have become particularly important. These algorithms require large amounts of high-quality data. In this article, I share 10 open source datasets for autonomous driving models.

1. KITTI Dataset

The KITTI dataset was created jointly by the Karlsruhe Institute of Technology in Germany and the Toyota Technological Institute at Chicago. It is a benchmark dataset for evaluating computer vision algorithms in autonomous driving scenarios. It is used to measure the performance of computer vision techniques such as stereo, optical flow, visual odometry, 3D object detection, and 3D tracking in vehicle environments.

2. Waymo Open Dataset

The Waymo Open Dataset contains data collected by Waymo vehicles over millions of miles of driving in Phoenix, Arizona; Kirkland, Washington; Mountain View, California; and San Francisco. It covers urban and suburban environments by day and night, at dawn and dusk, and in sunny and rainy weather. The data is divided into 1,000 driving segments, each capturing 20 seconds of continuous driving through the sensors installed on Waymo vehicles: five custom LiDAR units and five front- and side-facing cameras. At a sampling rate of 10 Hz, this is equivalent to 200,000 frames.
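The 200,000-frame figure follows directly from the segment count, segment length, and sampling rate quoted above. A minimal sketch of that arithmetic, with illustrative variable and function names that are not part of any Waymo tooling:

```python
# Figures taken from the paragraph above; all names here are illustrative.
NUM_SEGMENTS = 1_000     # driving segments in the dataset
SEGMENT_SECONDS = 20     # duration of each segment, in seconds
SAMPLE_RATE_HZ = 10      # sampling rate quoted for the sensors


def frames_per_sensor(segments: int, seconds: int, rate_hz: int) -> int:
    """Number of frames a single sensor records across all segments."""
    return segments * seconds * rate_hz


total_frames = frames_per_sensor(NUM_SEGMENTS, SEGMENT_SECONDS, SAMPLE_RATE_HZ)
print(total_frames)  # 200000
```

Note that this counts frames per sensor; with several cameras recording in parallel, the number of individual images would be correspondingly larger.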

3. A2D2 Dataset

A2D2 is Audi’s large autonomous driving dataset. It provides camera, LiDAR, and vehicle bus data, allowing developers and researchers to explore multimodal sensor fusion methods. The sensor suite includes six cameras and five LiDAR units for full 360-degree coverage. The data comes mainly from German streets and includes RGB images together with the corresponding 3D point cloud data. All recorded data is time-synchronized.

4. nuScenes Dataset

The nuScenes dataset is a public large-scale dataset for autonomous driving developed by the team at Motional. Motional is committed to making driverless vehicles safe, reliable, and accessible. By releasing some of its data to the public, Motional aims to advance research in computer vision and autonomous driving.

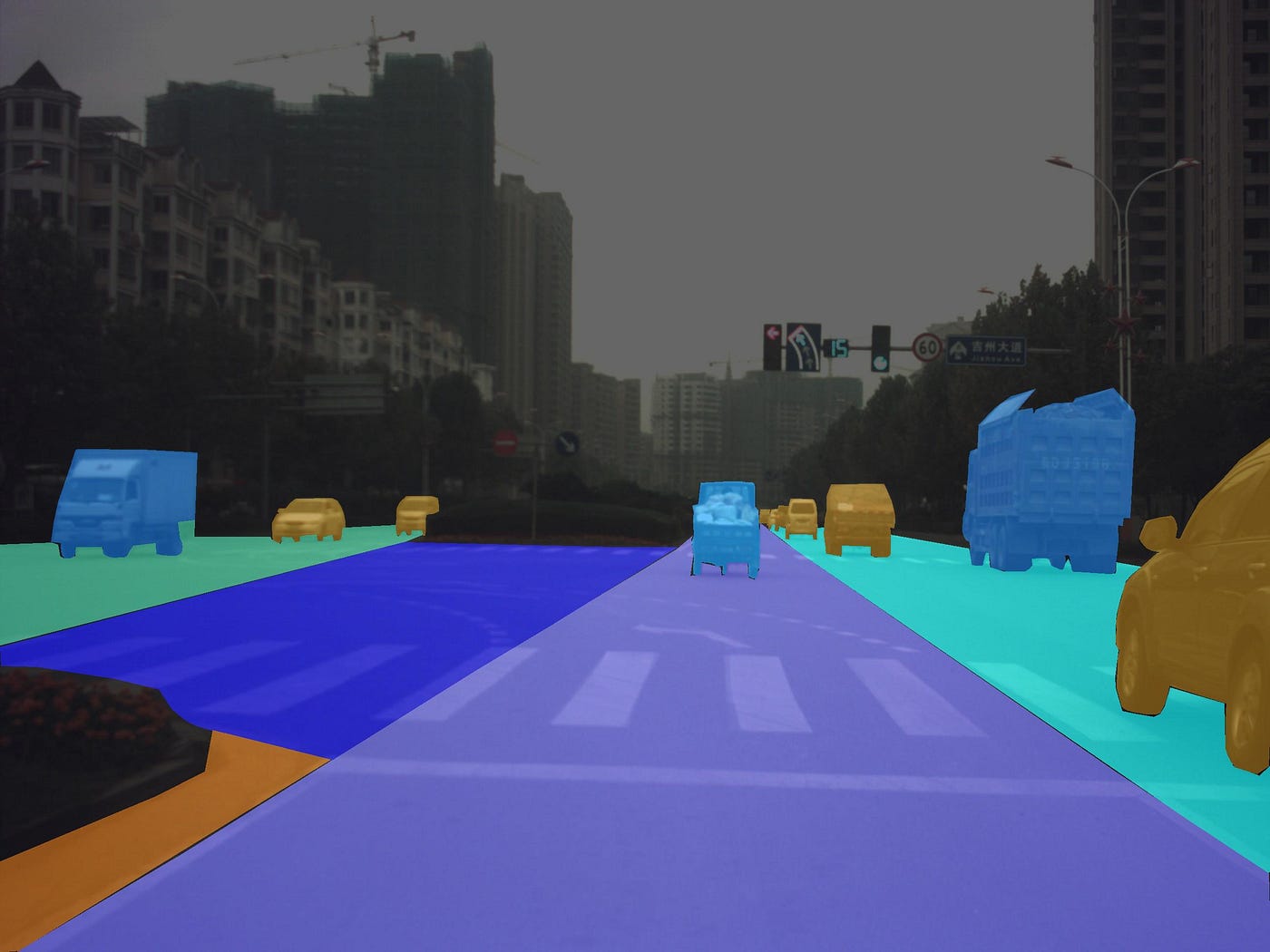

5. Cityscapes Dataset

Cityscapes is a public dataset jointly released by the Mercedes-Benz Autonomous Driving Laboratory, the Max Planck Institute, and Darmstadt University of Technology. It focuses on the semantic understanding of urban street scenes. The dataset contains stereo video sequences recorded in street scenes from 50 different cities, across different seasons and weather conditions. Cityscapes has two sets of evaluation criteria: fine and coarse. The former provides 5,000 finely annotated images; the latter provides the same 5,000 finely annotated images plus 20,000 coarsely annotated images.

6. BDD100K Dataset

In May 2018, the Berkeley AI Research lab (BAIR) at UC Berkeley released the public driving dataset BDD100K, together with an image annotation system. BDD100K contains 100,000 high-definition videos, each about 40 seconds long at 720p and 30 fps. A key frame is sampled at the 10th second of each video, giving 100,000 annotated images of 1280×720 pixels. The images cover different weather conditions, scenes, and times of day, and include both sharp and blurred pictures, so the dataset is both large and varied.
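To make the key-frame sampling concrete, here is a small sketch of how a timestamp maps to a frame number. It assumes a constant frame rate and zero-based frame indexing; the function name is hypothetical and not taken from the BDD100K tooling.

```python
def keyframe_index(timestamp_s: float, fps: float) -> int:
    """Zero-based index of the frame closest to timestamp_s.

    Assumes a constant frame rate, which is a simplification.
    """
    return round(timestamp_s * fps)


FPS = 30               # frame rate quoted for the videos
VIDEO_SECONDS = 40     # approximate length of each video
KEYFRAME_SECOND = 10   # key frame is sampled at the 10th second

index = keyframe_index(KEYFRAME_SECOND, FPS)
frames_per_video = VIDEO_SECONDS * FPS
print(index, frames_per_video)  # 300 1200
```

Under these assumptions, the key frame is roughly frame 300 out of about 1,200 frames in each video.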

7. ApolloCar3D Dataset

The ApolloCar3D dataset contains 5,277 driving images and over 60K car instances, where each car is fitted with an industry-grade 3D CAD model with absolute model dimensions and semantically labeled keypoints. It is more than 20 times larger than PASCAL3D+ and KITTI, the previous state-of-the-art datasets.

8. Argoverse Dataset

The Argoverse dataset was released by Argo AI, Carnegie Mellon University, and the Georgia Institute of Technology to support research on 3D tracking and motion forecasting for autonomous vehicles. It consists of two parts: Argoverse 3D Tracking and Argoverse Motion Forecasting.

9. H3D-HRI-US Dataset

Honda Research Institute released its H3D autonomous driving dataset in March 2019. It is a large-scale, full-surround 3D multi-object detection and tracking dataset collected using 3D LiDAR scanners. It contains 160 crowded and highly interactive traffic scenes with a total of 1 million labeled instances in 27,721 frames. With its size, rich annotations, and complex scenes, H3D is intended to stimulate research on full-surround 3D multi-object detection and tracking.

10. Lyft Dataset

The Lyft dataset was presented at its release as the largest traffic agent motion dataset. It includes motion logs of cars, cyclists, pedestrians, and other traffic agents encountered by an autonomous fleet, and is well suited to training motion prediction models. Specifically, it includes more than 1,000 hours of traffic agent movement, 16K miles of data from 23 vehicles, and 15K semantic map annotations.

In an era of deep integration between data and artificial intelligence, the richness and quality of datasets will directly determine how far an AI technology can go. In the future, the effective use of data will drive innovation and bring more growth and value to all walks of life. With the help of automatic labeling tools, GANs, or data augmentation techniques, we can improve the efficiency of data annotation and reduce labor costs.
